Tsubame 3.0 is to be installed later this year and is expected to lay the foundation for a new kind of HPC application that brings together simulation and modelling with machine learning workloads.

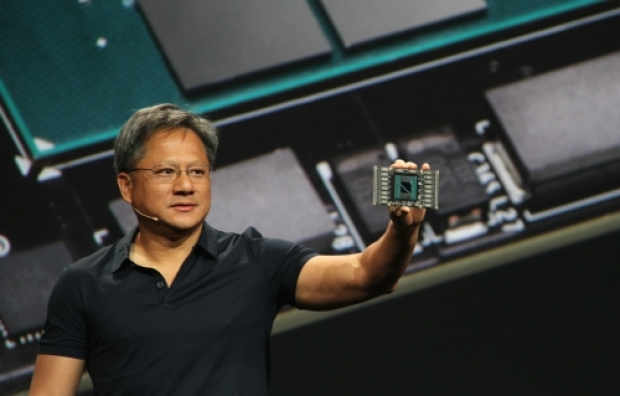

Tsubame 3.0 is what Nvidia has been calling an AI supercomputer.

The Tsubame nodes are based on the latest generation of “Pascal” Tesla P100 accelerators from Nvidia. Each node has two CPUs and four Tesla P100s, and the whole thing is water cooled.

Tsubame 3.0 will have 2,160 GPUs across 540 blades. The CPUs are “Broadwell” Xeon E5-2680 v4 processors, which have 14 cores spinning at 2.4 GHz and fit in a 120 watt thermal envelope. That modest thermal envelope, combined with water cooling, is how HPE/SGI is able to cram all of this compute into a space less than 1U tall in the rack.
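The system-wide counts follow directly from the per-node configuration. The sketch below assumes one node per blade, which is what 2,160 GPUs in 540 blades at four P100s per node implies; the constant names are our own, chosen for illustration.

```python
# Per-node configuration as described above. One node per blade is an
# assumption inferred from 2,160 GPUs / 540 blades = 4 GPUs per blade.
BLADES = 540
GPUS_PER_NODE = 4    # Tesla P100
CPUS_PER_NODE = 2    # Xeon E5-2680 v4
CORES_PER_CPU = 14

gpus = BLADES * GPUS_PER_NODE
cpus = BLADES * CPUS_PER_NODE
cpu_cores = cpus * CORES_PER_CPU

print(f"GPUs: {gpus:,}")            # GPUs: 2,160
print(f"CPUs: {cpus:,}")            # CPUs: 1,080
print(f"CPU cores: {cpu_cores:,}")  # CPU cores: 15,120
```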

Tsubame 3.0 will have 12.15 petaflops of peak double precision performance and 24.3 petaflops at single precision. Importantly, it is rated at 47.2 petaflops at half precision, the format that matters for the neural networks used in deep learning applications.
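Spreading the quoted peaks evenly over the 540 nodes gives a rough per-node figure. This is only a back-of-the-envelope sketch: it divides the system totals (which include both CPU and GPU contributions) by the node count, and is not a vendor per-node rating.

```python
# System-wide peak ratings quoted above, in petaflops.
PEAK_PF = {"double": 12.15, "single": 24.3, "half": 47.2}
NODES = 540  # assumption: one node per blade, four P100s each

# Convert petaflops to teraflops (x1000) and split evenly across nodes.
per_node_tf = {prec: pf * 1000 / NODES for prec, pf in PEAK_PF.items()}

for prec, tf in per_node_tf.items():
    print(f"{prec:>6}: {tf:.1f} TF per node")

# Each precision's rating relative to the double-precision rating.
ratios = {prec: pf / PEAK_PF["double"] for prec, pf in PEAK_PF.items()}
print({prec: round(r, 2) for prec, r in ratios.items()})
```

This works out to roughly 22.5, 45.0 and 87.4 teraflops per node. Single precision comes in at exactly twice the double-precision rating, while half precision is slightly less than twice the single-precision one.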

Added to the existing Tsubame 2.5 machine and the experimental immersion-cooled Tsubame-KFC system (which, as far as we know, brings the necessary fried chicken), Tsubame 3.0 will give the Tokyo Institute of Technology (TiTech) a total of 6,720 GPUs.
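Subtracting the new machine's GPUs from that combined total gives the number implied for the two older systems together. A trivial sketch using only the figures quoted in this article, which does not say how the remainder splits between Tsubame 2.5 and Tsubame-KFC:

```python
TOTAL_GPUS = 6_720        # Tsubame 3.0 + Tsubame 2.5 + Tsubame-KFC
TSUBAME3_GPUS = 540 * 4   # 540 nodes, four Tesla P100s each

older_gpus = TOTAL_GPUS - TSUBAME3_GPUS
print(f"Tsubame 2.5 and Tsubame-KFC combined: {older_gpus:,} GPUs")
# Tsubame 2.5 and Tsubame-KFC combined: 4,560 GPUs
```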

To bring that combined fleet to bear on AI work, Nvidia worked with TiTech to get half precision running on the Kepler GPUs in the older machines, which did not formally support it.

The Tsubame 3.0 nodes are being equipped with fast, non-volatile storage: 2 TB per node, or 1.08 PB across the whole machine. We do not know whether it is NAND flash or 3D XPoint memory. Each node also has 256 GB of main memory.
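Read as a system-wide total, the 1.08 PB of fast storage works out to a round figure per node, and the per-node main memory scales up the same way. A sketch assuming decimal units (1 PB = 1,000 TB, 1 TB = 1,000 GB) and the 540 nodes described earlier:

```python
NODES = 540
TOTAL_NVM_TB = 1_080     # 1.08 PB of non-volatile storage, expressed in TB
DRAM_PER_NODE_GB = 256

nvm_per_node_tb = TOTAL_NVM_TB / NODES
total_dram_tb = NODES * DRAM_PER_NODE_GB / 1_000

print(f"Non-volatile storage per node: {nvm_per_node_tb:.1f} TB")
# Non-volatile storage per node: 2.0 TB
print(f"Total main memory: {total_dram_tb:.2f} TB")
# Total main memory: 138.24 TB
```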

Published in Graphics

More details on Japanese HPC star in Nvidia’s universe

Not a Polaris at all

Details are coming out about the new Nvidia GPU-based supercomputer at the Global Scientific Information and Computing Center of the Tokyo Institute of Technology.
