The department's use of the technology gained national attention last week after the American Civil Liberties Union and New York Times brought to light the case of Robert Julian-Borchak Williams, a man who was wrongfully arrested because of the technology.

In a public meeting, Detroit Police Chief James Craig admitted that the technology, developed by a company called DataWorks Plus, almost never brings back a direct match and almost always misidentifies people.

"If we would use the software only [to identify subjects], we would not solve the case 95-97 percent of the time. That's if we relied totally on the software, which would be against our current policy ... If we were just to use the technology by itself, to identify someone, I would say 96 percent of the time it would misidentify."

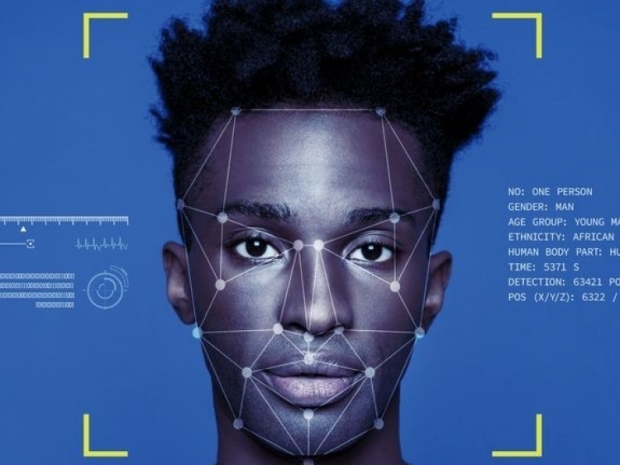

Detroit's police officers ultimately decide whom to question and investigate based on what the software returns and a detective's judgment. This means that people who may have had nothing to do with a crime end up being questioned and investigated by police. In Detroit, this means, almost exclusively, Black people.

So far this year, the technology has been used 70 times, according to data publicly released by the Detroit Police Department. In 68 of those cases, the photo fed into the software was of a Black person; in the other two, the race was listed as 'U,' which likely means unidentified (in other reports from the police, U stands for unidentified). These photos were largely pulled from social media (31 of 70 cases) or a security camera (18 of 70 cases).

Even when someone isn’t falsely arrested, their misidentification through facial recognition can often lead to an investigator questioning them, which is an inconvenience at best and a potentially deadly situation at worst. After all, an innocent person detained for questioning about a serious crime might not be too happy about it, and could end up getting shot.